Hackers Are Using AI to Create Smarter, Harder-to-Detect Malware

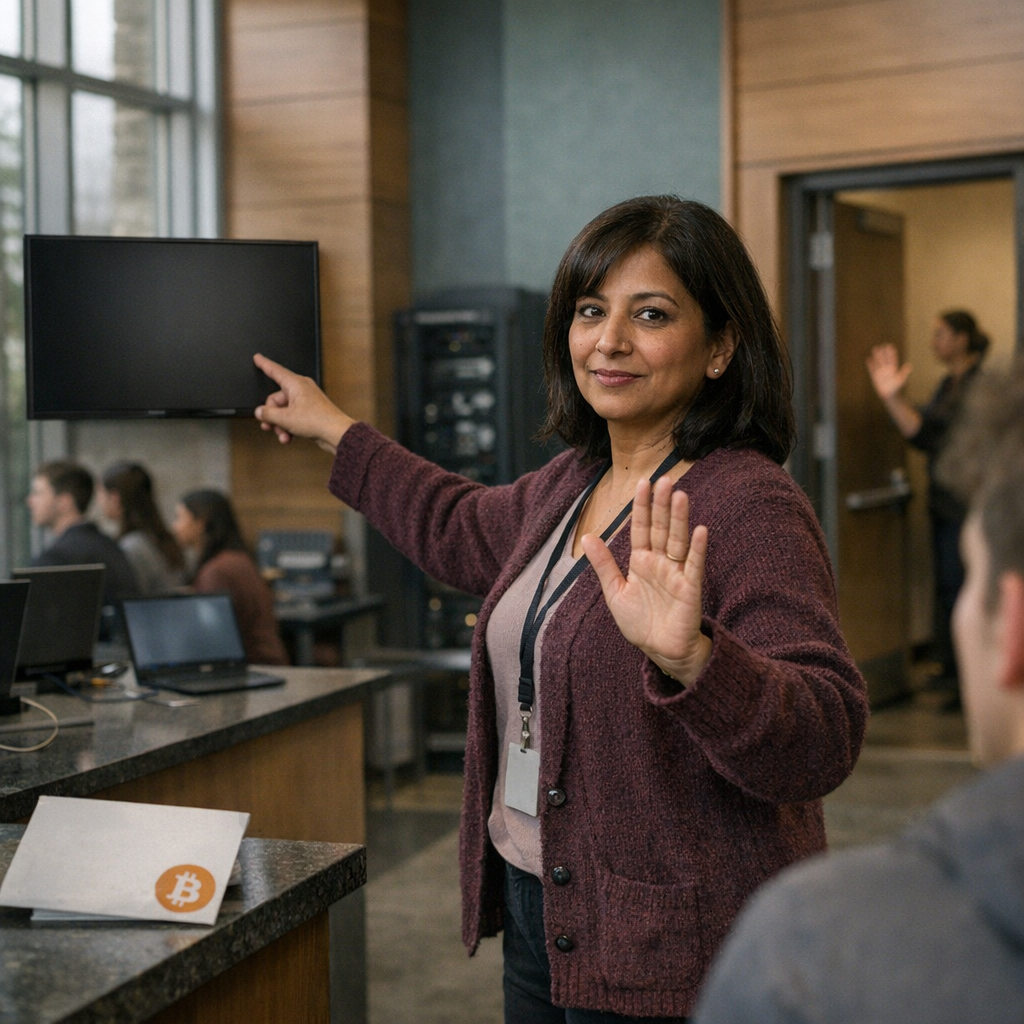

Cybercriminals are now weaponizing large language models, including Google's Gemini and models hosted on Hugging Face, to build malware that can evade security tools. Google's Threat Intelligence Group identified five malware families, including PROMPTFLUX, which uses AI to rewrite its own code, and PROMPTSTEAL, which uses an AI model to generate commands for collecting data from compromised systems.

These AI-powered tools help skilled hackers work faster and let less technical criminals create sophisticated attacks. Some malware calls AI services during execution to adapt its behavior and stay unpredictable, though most samples are still experimental prototypes.

Attackers are bypassing AI safety guardrails by claiming they need offensive code for cybersecurity competitions such as capture-the-flag events. While these techniques aren't widespread yet, experts warn they could make future attacks much more adaptive and harder to defend against.

Source: Dark Reading