Hackers Can Now Hide Malware Inside AI Files on Windows

Security loophole found: Malware can bypass Windows defenses by hiding in trusted AI model files. Learn how to protect yourself.

By Content Team

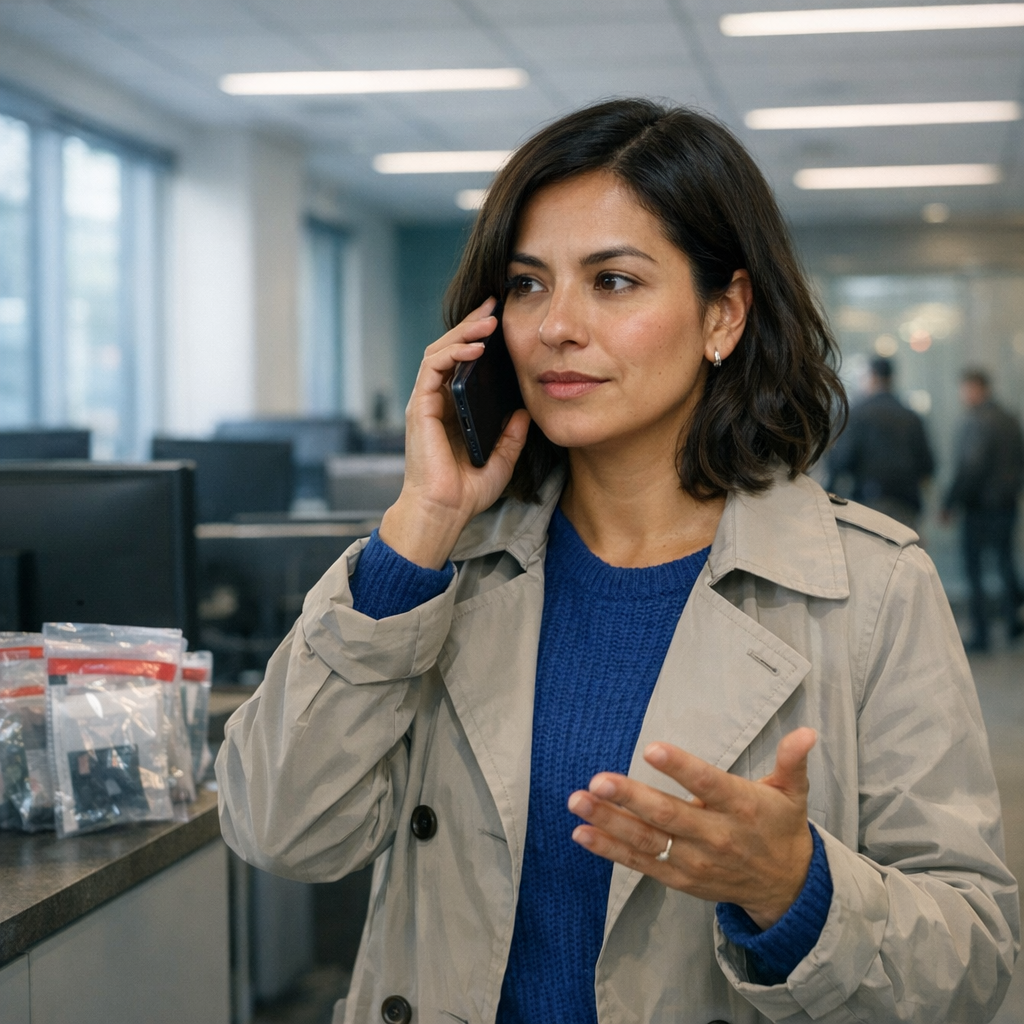

Security researcher hxr1 discovered a new way to sneak malware past Windows defenses by hiding it inside AI model files. The attack exploits Windows' built-in AI features, which automatically trust ONNX neural network files used by apps like Windows Hello and Office.

Because Windows doesn't check these AI files for threats, attackers can embed malicious code in a model's data and use Microsoft's own trusted system files to execute it. To security programs, the activity looks like legitimate AI processing rather than a cyberattack.
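To illustrate what scanning a model file for embedded code might involve, here is a minimal Python sketch of one narrow heuristic. It assumes the payload is stored as an unencoded Windows executable (PE) image somewhere in the file's bytes, which the research summary above does not confirm; an encrypted or encoded payload would not be caught. The function name `find_embedded_pe` is hypothetical.

```python
import struct


def find_embedded_pe(data: bytes) -> list[int]:
    """Return offsets in `data` where a Windows PE executable header appears.

    Heuristic only (assumption: the payload is a raw, unencoded PE image).
    """
    hits = []
    pos = data.find(b"MZ")
    while pos != -1:
        # A DOS header is 64 bytes long; the 4-byte little-endian field at
        # offset 0x3C gives the position of the "PE\0\0" signature.
        if pos + 0x40 <= len(data):
            (e_lfanew,) = struct.unpack_from("<I", data, pos + 0x3C)
            sig_at = pos + e_lfanew
            if data[sig_at:sig_at + 4] == b"PE\x00\x00":
                hits.append(pos)
        pos = data.find(b"MZ", pos + 1)
    return hits
```

A caller could read a model file's bytes and treat any non-empty result as a reason to inspect the file more closely. Checking the `PE` signature as well as the `MZ` marker matters because the two-byte `MZ` sequence alone can occur by chance in large blocks of binary weight data.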

The researcher says the technique highlights a major blind spot as AI becomes more common. Security tools need to be updated to scan AI model files, and users shouldn't blindly trust AI models downloaded from the internet.
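One practical way to avoid blindly trusting a downloaded model is to compare its checksum with one published by the model's provider before loading it. The sketch below assumes the provider publishes a SHA-256 digest through a channel you trust; the function names `sha256_of` and `is_trusted_model` are hypothetical. A matching digest only shows the file is the one the provider released, not that the file is harmless.

```python
import hashlib
import hmac
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Compute the SHA-256 hex digest of a file, reading it in chunks."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def is_trusted_model(path: Path, expected_sha256: str) -> bool:
    """Return True only if the file's digest matches the published one."""
    expected = expected_sha256.strip().lower()
    return hmac.compare_digest(sha256_of(path), expected)
```

An application could call `is_trusted_model` on a downloaded model file and refuse to load it when the result is `False`.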

Source: Dark Reading
